Summary of Andrej Karpathy’s Intro to LLMs Video

Understanding LLMs is a significant part of being able to use today’s more advanced models. Going back to the basics is always helpful for everyone. Here is a summary I wrote after watching Andrej’s video on the introduction to LLMs. [Prerequisite: Understanding of deep learning basics]

· He begins by explaining, in very simple terms, what an LLM is and how much compute it takes to train one. Next, he delves into next-word prediction by a neural network: the network's parameters are adjusted during training on the data you provide, so that it gets better at predicting the next word.

· For next-word prediction, the state-of-the-art (SOTA) models are based on the transformer neural network architecture, in which billions of parameters are spread throughout the network. Training adjusts these parameters step by step so that the predictions improve. However, because neural networks are largely black boxes, we don’t truly understand how these parameters collaborate to make predictions.

· The pre-training stage trains the model on a large number of documents from the internet; it is about gaining knowledge. The fine-tuning stage is about “alignment”: changing the format from internet documents to that of an assistant, or whatever app you are building.

· The following screenshot summarizes how to train ChatGPT, including the stages involved, the frequency, and the resources required:

· Beyond pre-training and fine-tuning, there is an optional stage 3 of fine-tuning: Reinforcement Learning from Human Feedback (RLHF), as OpenAI termed it. This stage uses comparison labels, since it is sometimes easier for a labeler to compare candidate answers than to write one from scratch. The haiku example shown in the video illustrates this well.

· He gave a demo of ChatGPT showing how it responds to user queries and which tools it may use behind the scenes.

· Multimodality is improving: advanced LLMs and their associated models can increasingly process data from multiple modalities, such as images and audio.
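To make the next-word prediction idea from the earlier points a bit more concrete, here is a minimal sketch in Python. It is not how an LLM works internally: a real model uses a transformer with billions of learned parameters, while this toy version simply counts which word follows which in a tiny made-up corpus. The function names and the corpus are assumptions made purely for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probs(counts, word):
    """Turn the follow-up counts for `word` into a probability distribution."""
    followers = counts.get(word.lower(), Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # word never seen during "training"
    return {w: c / total for w, c in followers.items()}


def predict_next(counts, word):
    """Pick the most likely next word, or None for an unseen word."""
    probs = next_word_probs(counts, word)
    return max(probs, key=probs.get) if probs else None


# Hypothetical toy corpus standing in for "documents from the internet".
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)

print(next_word_probs(model, "the"))  # "cat" gets probability 0.5
print(predict_next(model, "the"))     # cat
```

The counts play the role of the parameters here: they are learned from data and then used to assign a probability to every candidate next word. An LLM does the same job with a far richer model and much longer context.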

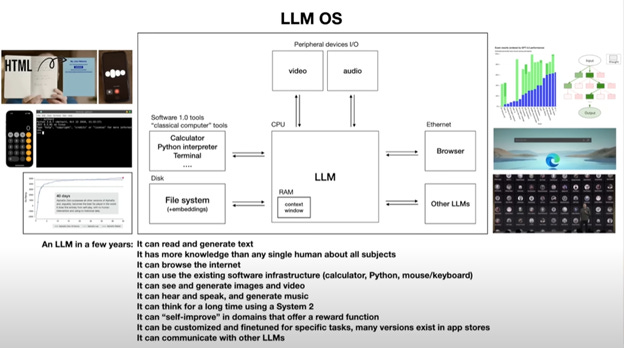

· To explain how an LLM works, Andrej compares it to a computer’s operating system. He shared this very interesting workflow and discussed what LLMs may be able to do in the future, as seen in the screenshot below:

· Towards the end, he discussed various security concerns with LLMs, such as prompt injection and data poisoning, among others.
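Returning to the comparison labels used in the optional third stage: one common way to learn from "answer A is better than answer B" labels is a pairwise loss on the scores of a reward model. The summary above does not describe the exact training objective, so treat the sketch below as an assumption for illustration. The reward scores are made-up numbers, and `pairwise_preference_loss` is a hypothetical helper, not an API from any library.

```python
import math


def pairwise_preference_loss(reward_chosen, reward_rejected):
    """Loss -log(sigmoid(r_chosen - r_rejected)), computed in a stable way.

    The loss is small when the answer the labeler preferred scores higher
    than the rejected one, and large when the ranking is the wrong way round.
    """
    diff = reward_chosen - reward_rejected
    # -log(sigmoid(d)) == softplus(-d) == max(-d, 0) + log(1 + exp(-|d|))
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))


# Hypothetical reward-model scores for two candidate haikus,
# where the labeler preferred the first one.
agrees_with_labeler = pairwise_preference_loss(2.0, 0.0)
disagrees_with_labeler = pairwise_preference_loss(0.0, 2.0)

print(agrees_with_labeler < disagrees_with_labeler)  # True
```

The labeler never has to write a haiku or assign it an absolute score; picking the better of two candidates is enough to produce a training signal.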

Overall, the way he explains LLMs and discusses both their prospects and the security concerns will bring you up to speed with LLM advancements.